Abusing pipelines to hijack production

On a recent engagement, the customer wanted to test how an attacker who had successfully compromised external developer accounts could reach production resources. Companies inviting external consultants into their CI/CD and development services (like Azure DevOps) is something I see a lot, and something I have experienced as a developer myself.

Let’s explore how an attacker with developer access can abuse a DevOps pipeline to dump accessible Azure KeyVaults via a “Service Connection”, as well as exfiltrate the loot using the secrets retrieved.

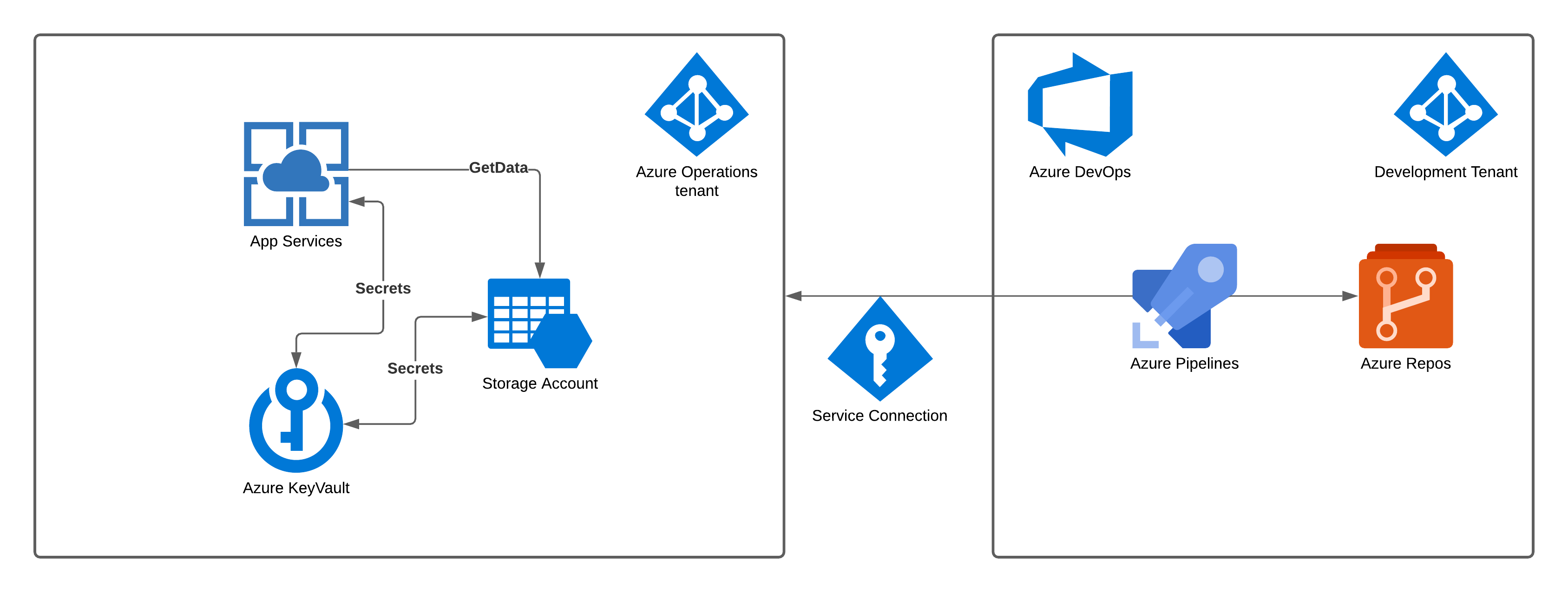

Consider the following example environment.

Let’s assume that the development team and its user accounts belong to a dedicated Azure AD tenant. This tenant is separate from the one that developers want to deploy Azure services onto. The Azure environment tenant contains an App Service pulling data from a Storage Account and accessing needed secrets/keys from an Azure KeyVault service. To give the Azure DevOps pipelines created by the development team access to that tenant (so that resources can be updated/deployed), an “Azure Service Connection” is created.

An “Azure Service Connection” allows Agents/Tasks/Jobs within all or specific DevOps pipelines to access a scoped portion of an Azure tenant. The scope can be selected in various ways, but typically it’s a dedicated Azure “Subscription” that reflects a particular environment (Development, Testing, Production, etc.).

The “Azure Service Connection” can be a Service principal (automatic or manual setup), a Managed identity, or a Publish Profile. “Automatic Service principal” is the easiest one to set up, which leads me to believe it’s the one most commonly used (really just guessing here). You can read more about Service Connections and scope in the Azure DevOps documentation.

Using an Azure Service Connection to give an Azure DevOps Pipeline access to the environment itself is not “wrong” by any means and can be practiced safely when properly configured. However, when you add poor/rushed identity and access management, it can quickly get dangerous.

In short, giving a DevOps pipeline access to the production subscription via a Service connection is the same as providing all developers who can edit that pipeline CLI access to the production environment. The issue with “Everyone” being able to edit “Everything” within DevOps is a common misconfiguration that I see all the time, so I decided to put something together to showcase how dangerous this can be!
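To make that equivalence concrete, here is a minimal sketch that computes which subscriptions each user can effectively reach through the pipelines they are allowed to edit. The inventory (user names, pipeline names, connection names) is entirely hypothetical, and the model is a simplification: it is not how Azure DevOps stores or evaluates permissions.

```python
from dataclasses import dataclass


@dataclass
class Pipeline:
    name: str
    editors: set[str]               # users allowed to edit the pipeline
    service_connections: set[str]   # connections the pipeline may use


def effective_reach(pipelines, connection_scopes):
    """Map each user to the subscriptions reachable via pipelines they can edit."""
    reach: dict[str, set[str]] = {}
    for pipeline in pipelines:
        # Resolve every connection the pipeline can use to its subscription.
        scopes = {connection_scopes[c] for c in pipeline.service_connections}
        for user in pipeline.editors:
            reach.setdefault(user, set()).update(scopes)
    return reach


# Hypothetical inventory, for illustration only.
connection_scopes = {"sc-dev": "DEV", "sc-prod": "PROD"}
pipelines = [
    Pipeline("build-api", {"alice", "external-bob"}, {"sc-dev"}),
    Pipeline("release-api", {"alice", "external-bob"}, {"sc-prod"}),
]

reach = effective_reach(pipelines, connection_scopes)
for user in sorted(reach):
    print(user, sorted(reach[user]))
```

In this made-up inventory, the external account ends up with the same reach as the internal one, simply because both can edit the release pipeline.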

Azure KeyVault

A fundamental resource in many modern Azure solutions is Azure KeyVault. KeyVault gives developers and other resources a safe location to easily store secrets and certificates. ClientId, ClientSecret, ConnectionString, PGP keys: put it all in the vault!

KeyVault can integrate rather automagically with other Azure services as well as directly from an Azure DevOps pipeline. The latter use case is for storing sensitive application variables that need to be accessed during deployment. For this very reason, it is a prime target when attacking an Azure environment. In our example scenario, Azure KeyVault keeps the connection string our App Service needs to integrate with our storage account. If we could somehow dump this secret, we could use a tool like “Microsoft Azure Storage Explorer” to connect directly to the storage account and exfiltrate any potentially sensitive information it stores.

Pipeline Abuse

The payload we are looking to deploy into our pipeline is a modified version of PowerZure’s Get-AzureKeyVaultContent and Show-AzureKeyVaultContent functions (awesome stuff, hausec!). It will attempt to enumerate the accessible subscription, looking for Azure KeyVaults. When it finds one, it will try to connect and dump all the delicious secrets.

If you are editing/abusing an existing pipeline, find one that looks like it has something to do with production, click edit, and start from step five.

-

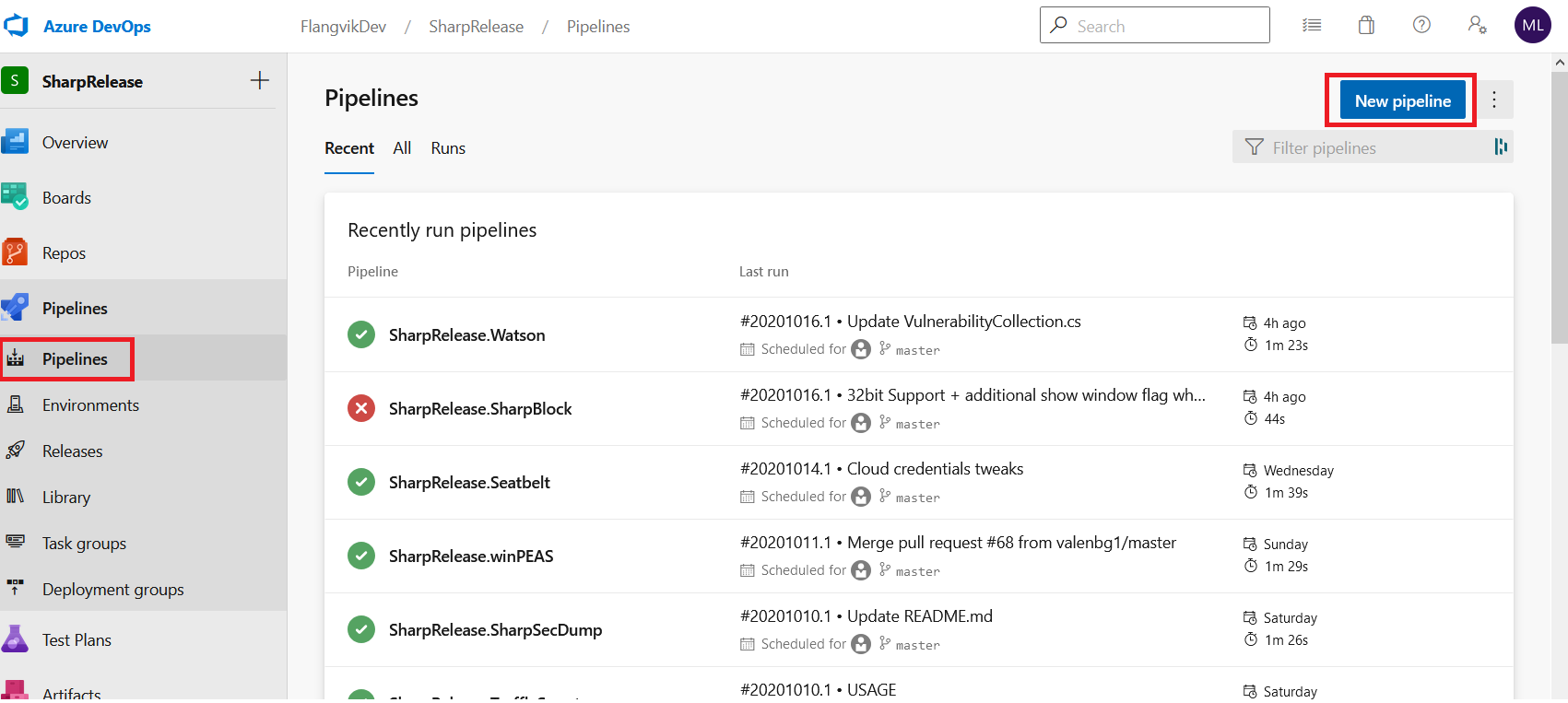

Access DevOps and create a pipeline from the release menu.

-

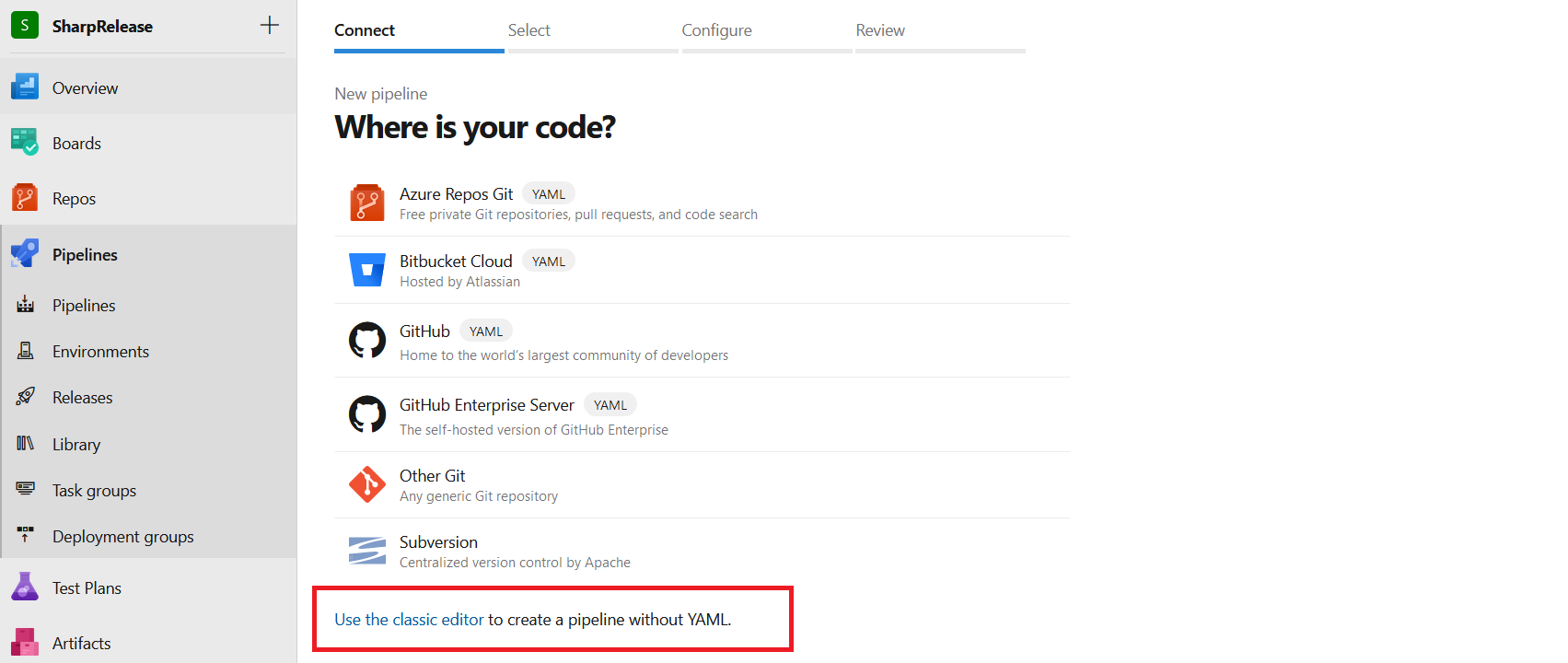

At the bottom, choose “Use the classic editor.”

-

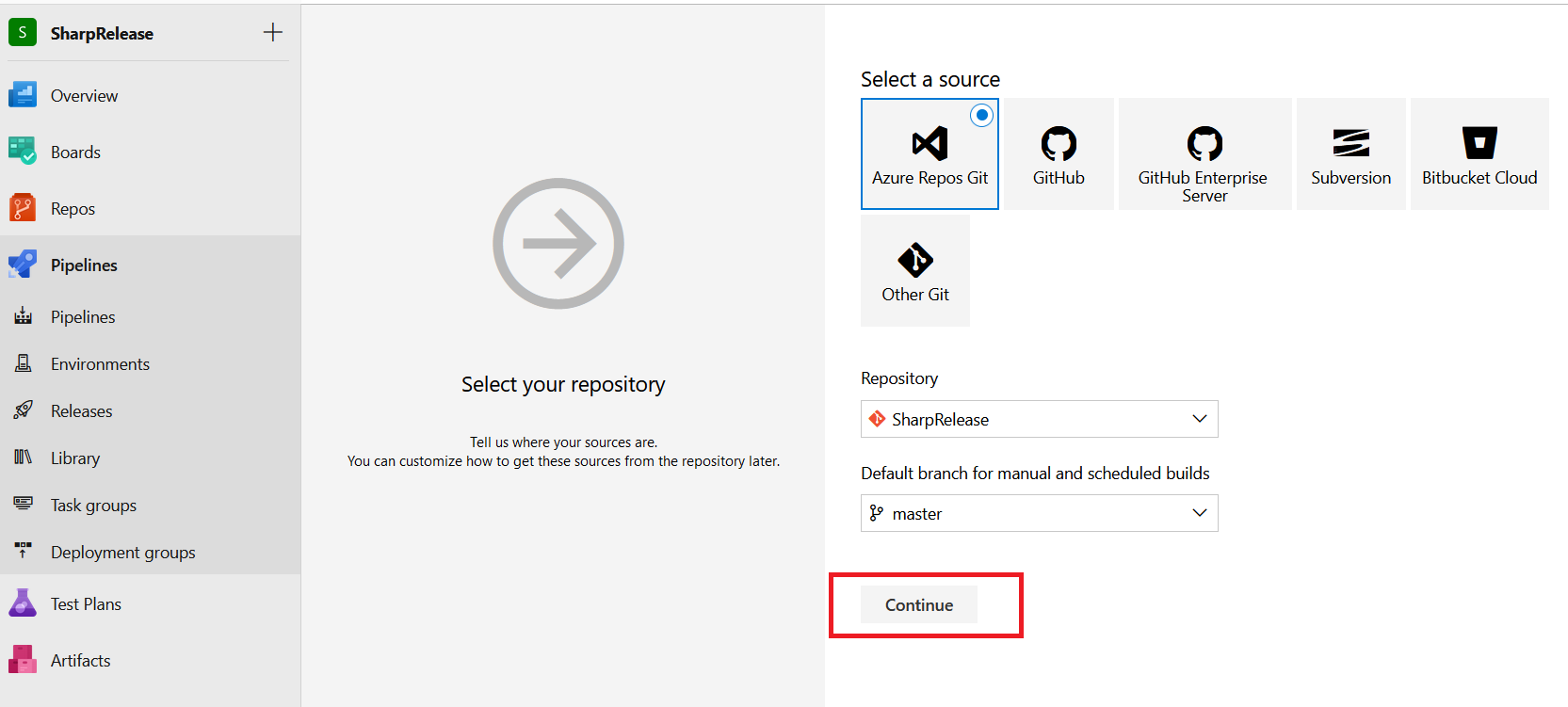

In this case, we will select the already existing Azure Repo. Click “Continue”.

-

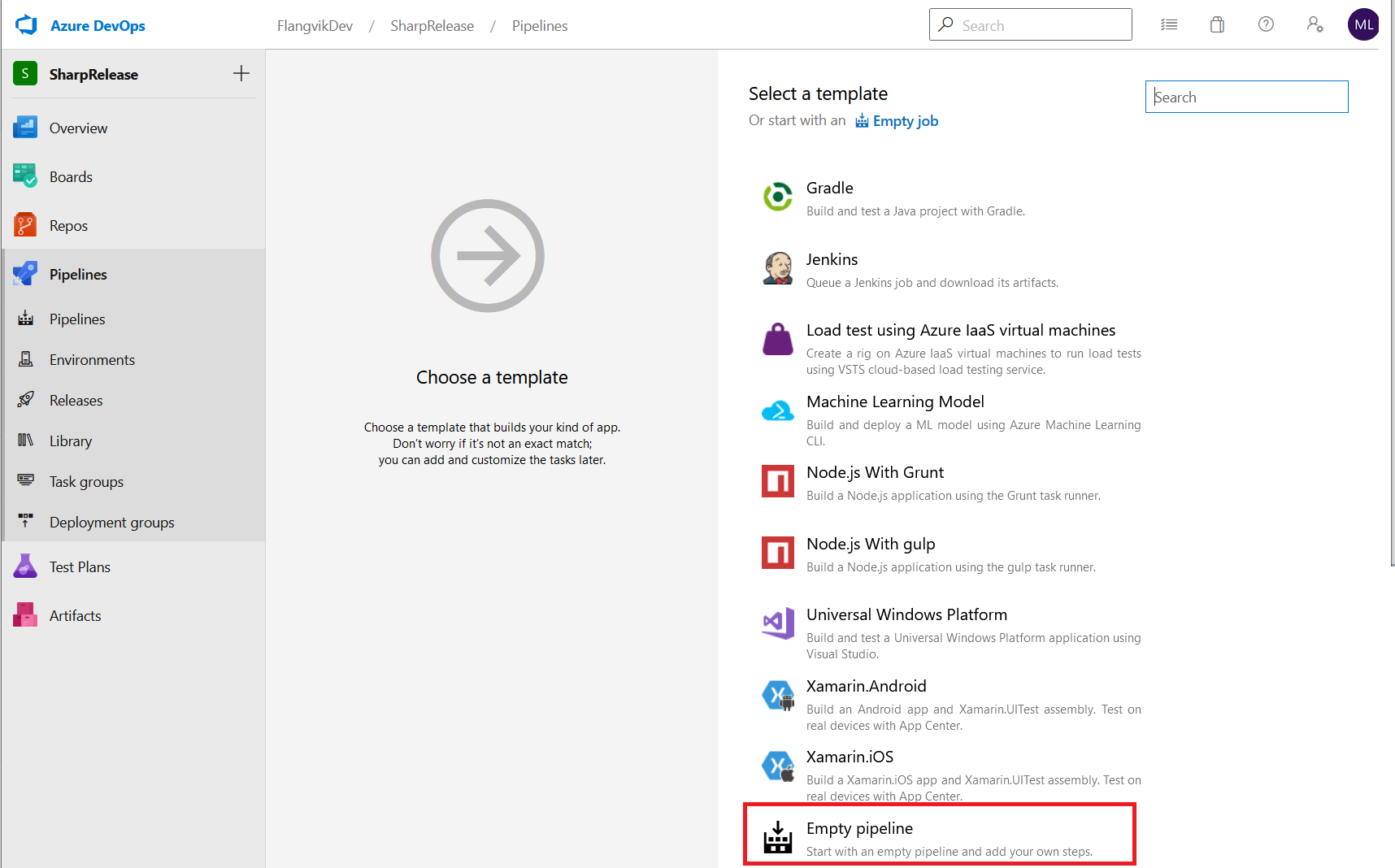

Scroll down until you find “Empty pipeline”, select it and click “Apply”.

-

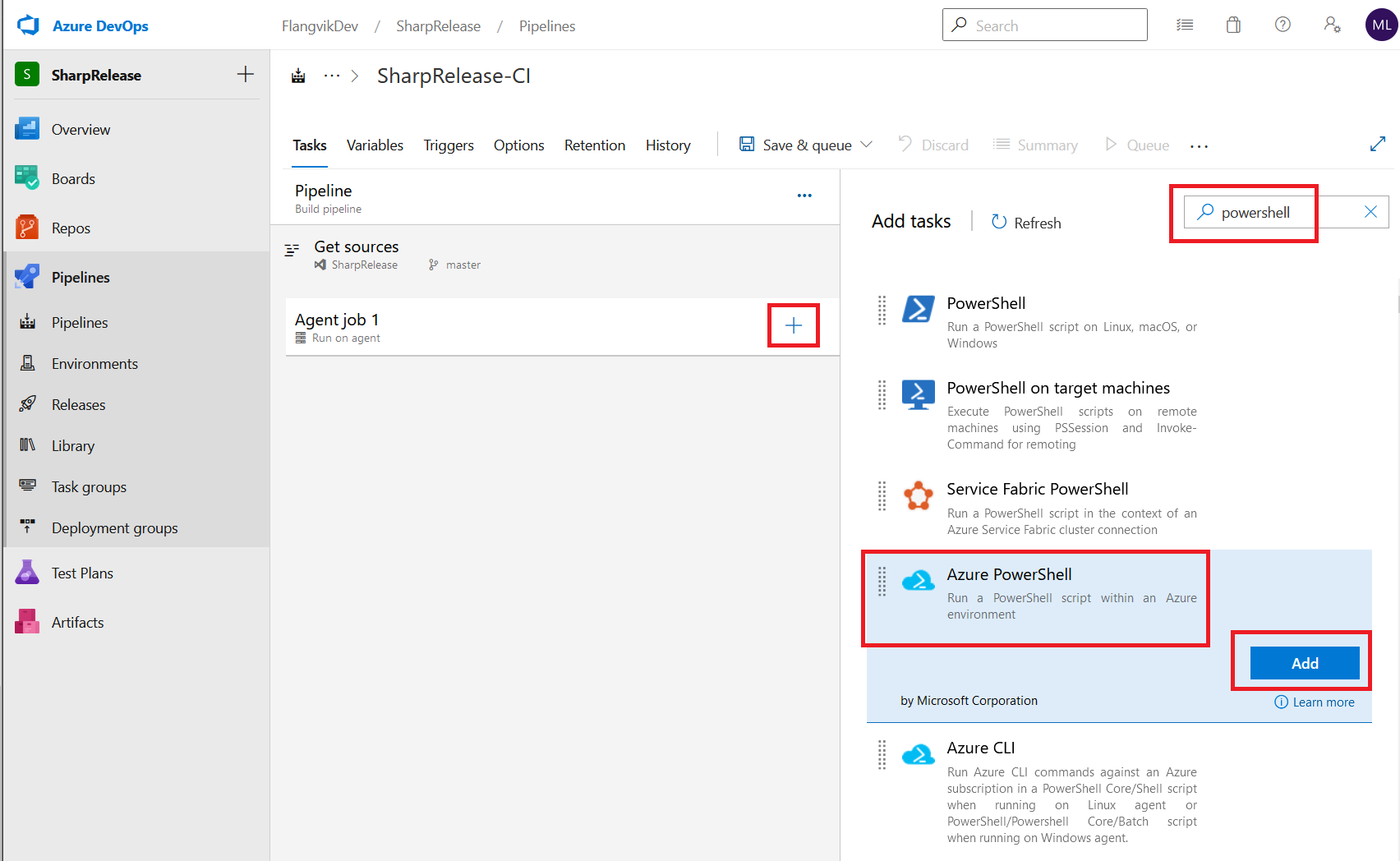

Click the “+” symbol inside Agent job 1, search for “powershell” and select “Azure PowerShell”. Click “Add”

-

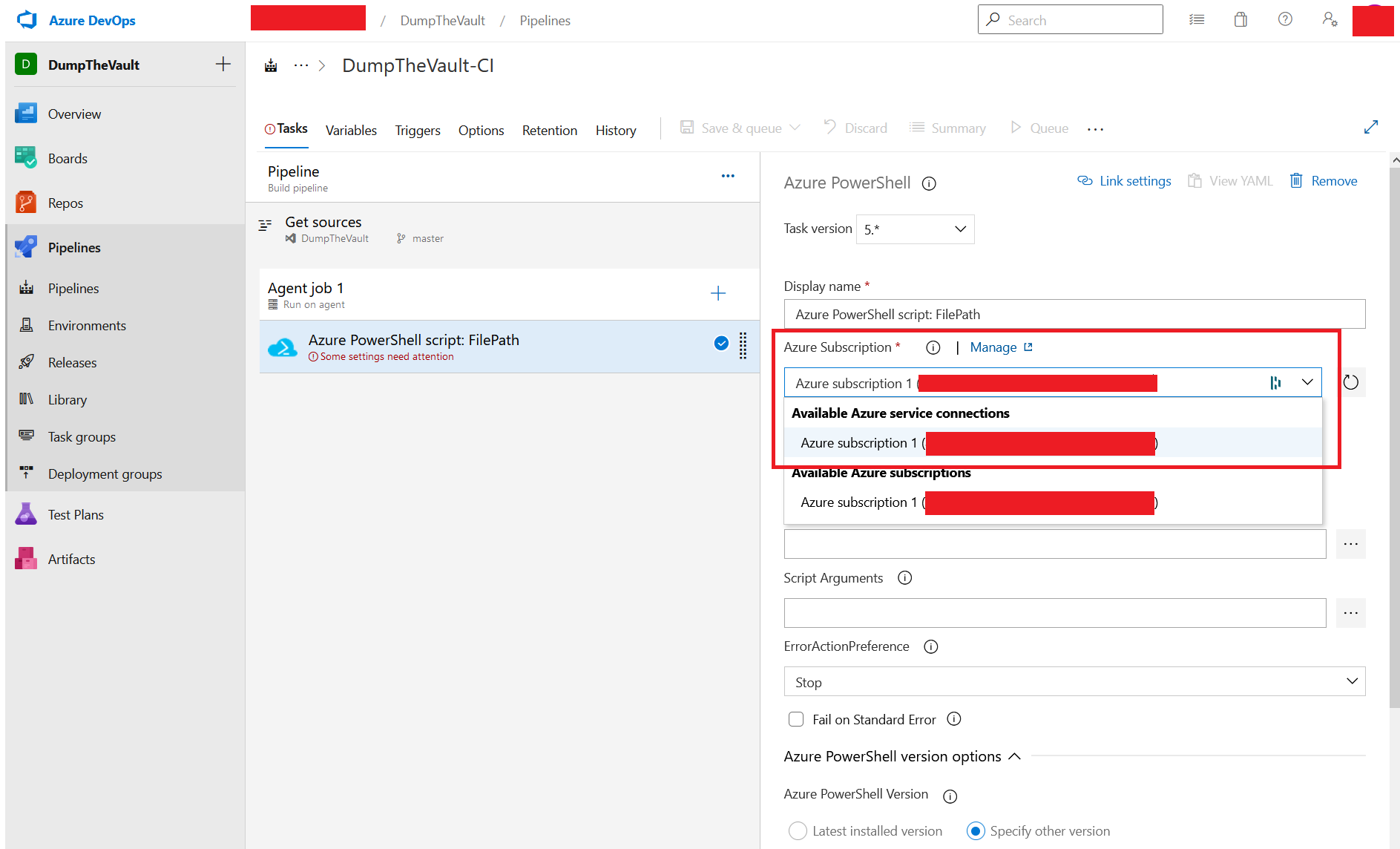

Under “Azure Subscription”, we get a list of possible subscriptions (Over Service Connections) we can connect into. Typically you may see a reference to “DEV, PROD, TEST” here. The common misconfiguration is that every pipeline has access to every “Azure Service Connection” (Thereby subscription). In this example, we only have access to one.

-

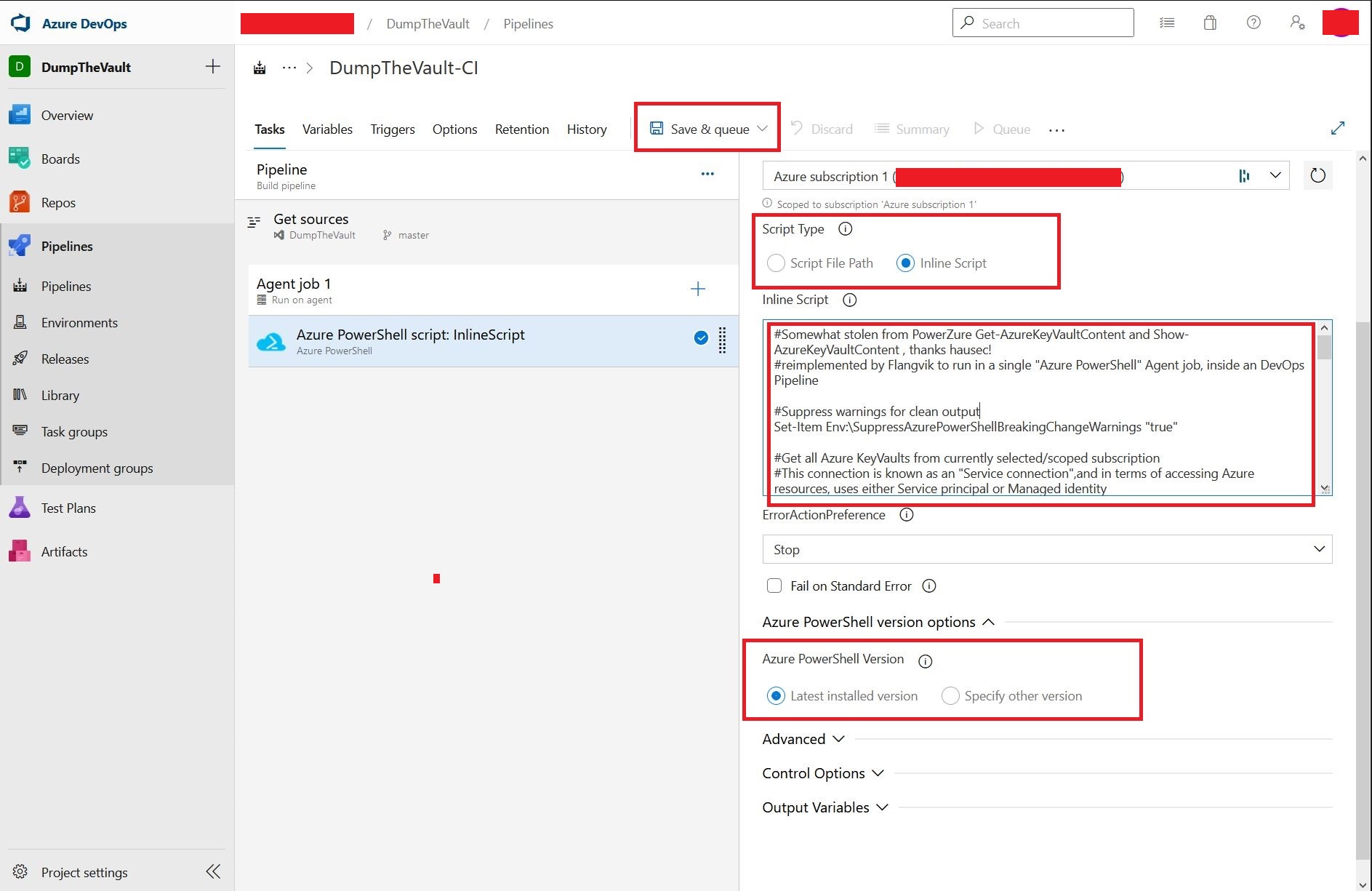

Under “Script Type” select “Inline Script” and copy in our modified PowerZure payload. Further down under “Azure PowerShell Version” select “Latest installed version”.

Everything is now set up. When ready, click “Save and queue”.

The pipeline will now authenticate our module via the Azure Service Connection. Afterward, the PowerShell script will search for and dump any KeyVault it can access. Accessible secrets will be dumped into the pipeline log, all unencrypted. Note that the output field “SecretValue” is base64 encoded to stop Azure DevOps from censoring it (replacing it with ****).
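Why does a simple encoding defeat the censoring? The sketch below assumes a masker that only replaces exact occurrences of known secret values. That is my simplified model for illustration, not Azure DevOps’ actual implementation, and the secret is a placeholder.

```python
import base64


def mask_secrets(log_line: str, known_secrets: list[str]) -> str:
    """Replace every exact occurrence of a known secret with asterisks."""
    for secret in known_secrets:
        log_line = log_line.replace(secret, "****")
    return log_line


secret = "not-a-real-secret"  # placeholder value, for illustration only
encoded = base64.b64encode(secret.encode("utf-8")).decode("ascii")

# The plain value matches the known secret and gets masked.
plain_line = mask_secrets(f"SecretValue: {secret}", [secret])

# The encoded value is a different string, so an exact-match masker misses it.
encoded_line = mask_secrets(f"SecretValue: {encoded}", [secret])

print(plain_line)
print(encoded_line)
```

The takeaway for defenders is that log masking is a convenience and should not be treated as a security boundary.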

Leaving secrets from a production environment in log files like these is a huge NOPE! I would strongly advise adding a level of output encryption to the “SecretValue” field(s) before running this during a real engagement.

Exfiltration

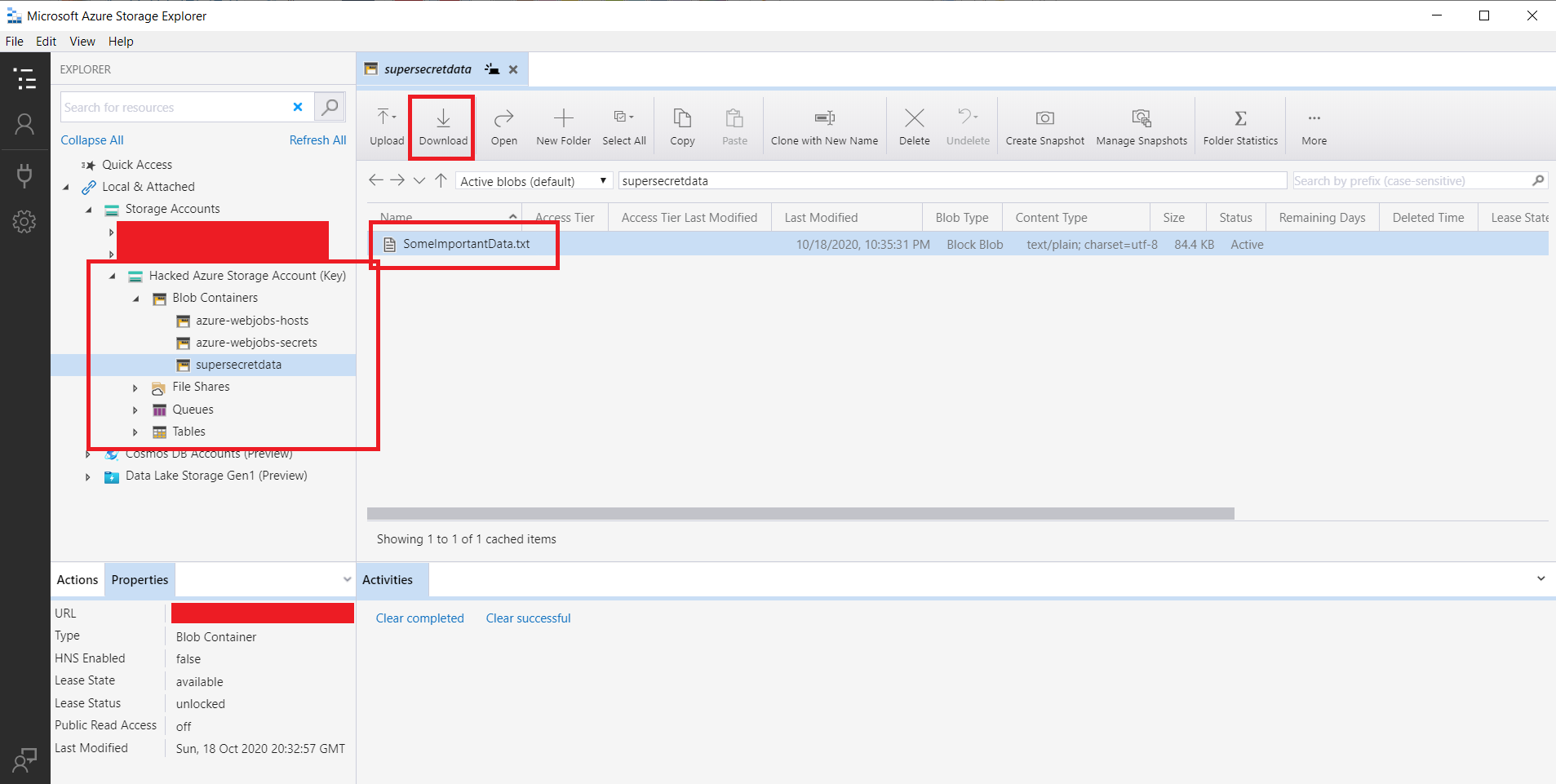

We got the secrets, now what can we do with them? Using tools like “Microsoft Azure Storage Explorer” and “ServiceBusExplorer” we can directly connect to Azure resources using the secrets we dumped.

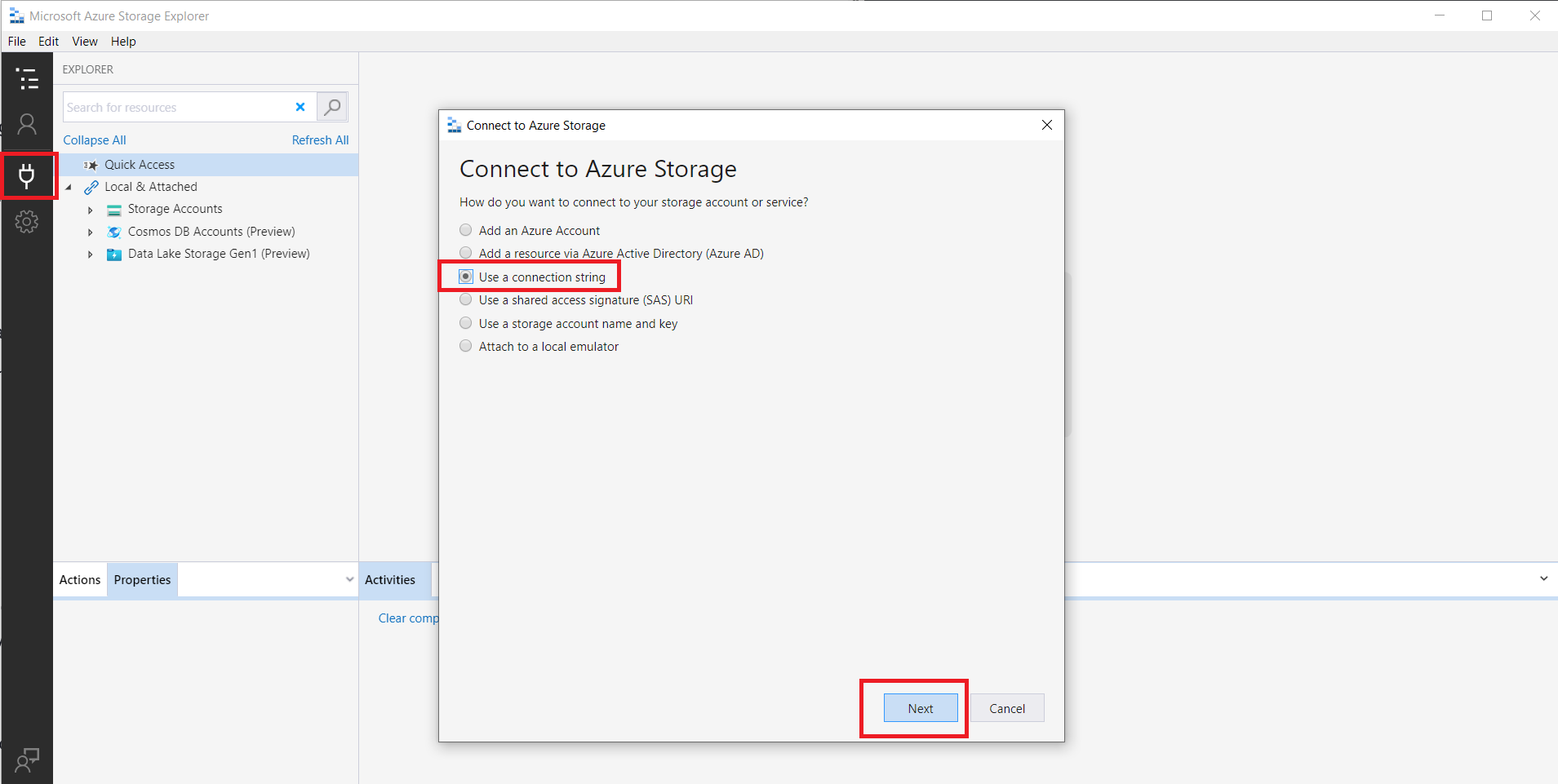

After installing Microsoft Azure Storage Explorer, fire it up and click the “Connect” icon on the left-hand side. Depending on what sort of secrets you managed to dump, select a fitting way to connect to your resource. Commonly this is a “Connection string”. Click “Next”.

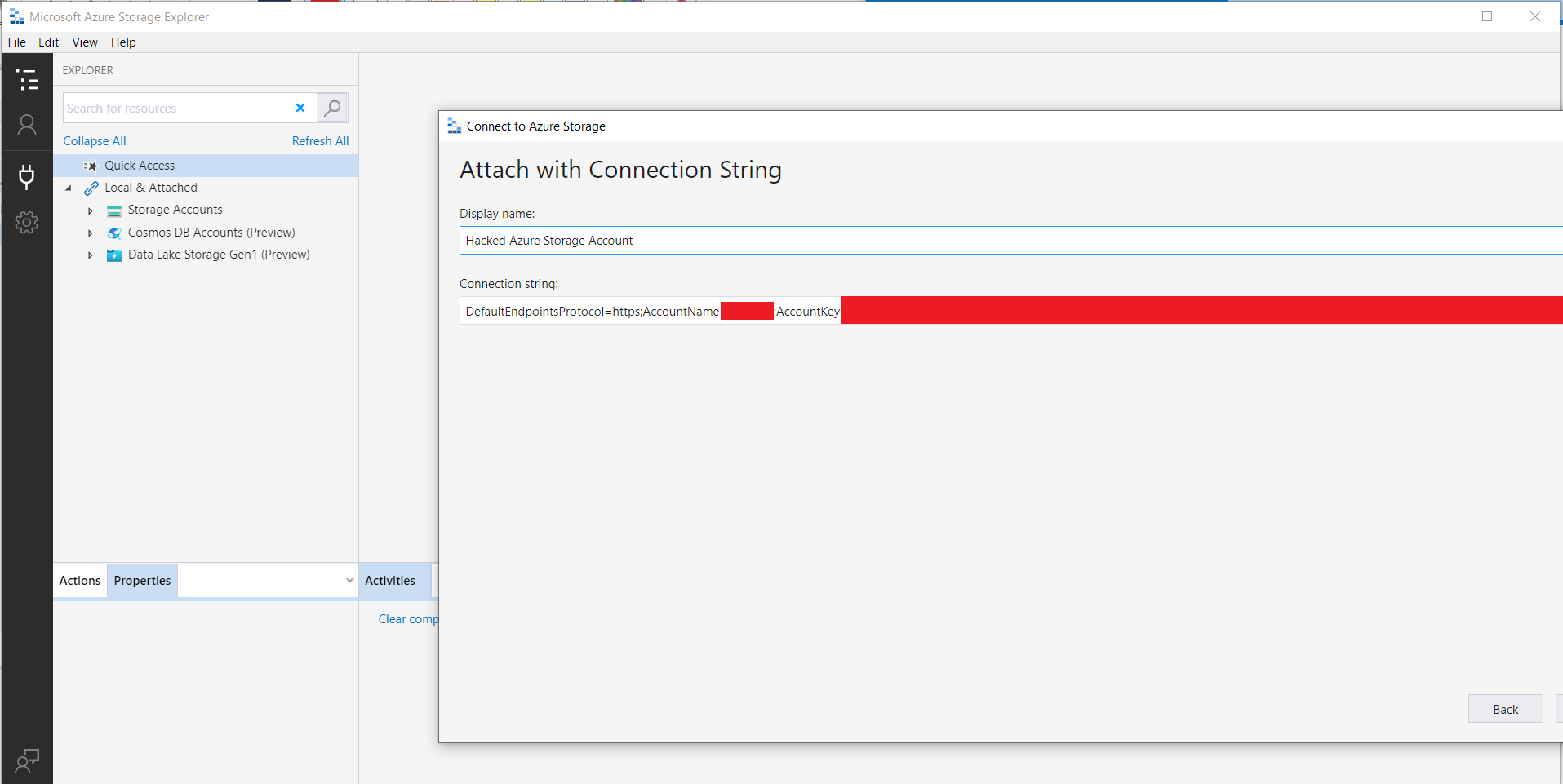

Base64 decode your connection string and paste it in, and make sure to give your target resource a cringy display name. Click “Next” and then “Finish”.
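If you want to sanity-check what you dumped before pasting it in, a few lines of Python will decode the value and split it into its fields. This is a minimal sketch: the sample connection string is made up (fake account name and key), and the helper name is my own.

```python
import base64


def parse_connection_string(encoded: str) -> dict[str, str]:
    """Base64 decode a connection string and split it into key/value fields."""
    decoded = base64.b64decode(encoded).decode("utf-8")
    fields = {}
    for part in decoded.split(";"):
        if not part:
            continue
        # Split on the first "=" only, since account keys often end with "=".
        key, _, value = part.partition("=")
        fields[key] = value
    return fields


# Made-up storage connection string, for illustration only.
sample = (
    "DefaultEndpointsProtocol=https;AccountName=examplestorage;"
    "AccountKey=ZmFrZS1rZXk=;EndpointSuffix=core.windows.net"
)
encoded_sample = base64.b64encode(sample.encode("utf-8")).decode("ascii")

fields = parse_connection_string(encoded_sample)
print(fields["AccountName"])
```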

You now have access to the Azure resource and can freely roam tables, file shares, and containers (folders). These steps are very similar for the ServiceBusExplorer application. Enjoy!

Defense

As DebugPrivilege kindly pointed out, Azure KeyVault has excellent logging capabilities. Integrating it with something like Log Analytics can help monitor and alert on suspicious activity.
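As a rough idea of what such an alert could look for, the sketch below counts secret read operations per caller and flags anyone above a threshold. The records are made-up and flattened for illustration: the `caller` field and the threshold are my own assumptions, so map them to whatever your exported KeyVault logs actually contain.

```python
from collections import Counter

# Operation names treated as secret reads in this sketch.
SECRET_READ_OPERATIONS = {"SecretGet", "SecretList"}


def flag_bulk_secret_readers(records, threshold=3):
    """Return callers with at least `threshold` secret read operations."""
    counts = Counter(
        record["caller"]
        for record in records
        if record["operationName"] in SECRET_READ_OPERATIONS
    )
    return {caller: n for caller, n in counts.items() if n >= threshold}


# Hypothetical, simplified log records.
records = [
    {"caller": "pipeline-sp", "operationName": "VaultGet"},
    {"caller": "pipeline-sp", "operationName": "SecretList"},
    {"caller": "pipeline-sp", "operationName": "SecretGet"},
    {"caller": "pipeline-sp", "operationName": "SecretGet"},
    {"caller": "pipeline-sp", "operationName": "SecretGet"},
    {"caller": "app-service", "operationName": "SecretGet"},
]

suspicious = flag_bulk_secret_readers(records)
print(suspicious)
```

A deployment identity that suddenly lists and reads every secret in a vault looks very different from an App Service fetching the one connection string it needs.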

However, the root cause of this issue is the poor and often rushed setup and configuration of pipelines within DevOps: users having write access to all pipelines, all pipelines having access to all Azure Service Connections, and so forth.

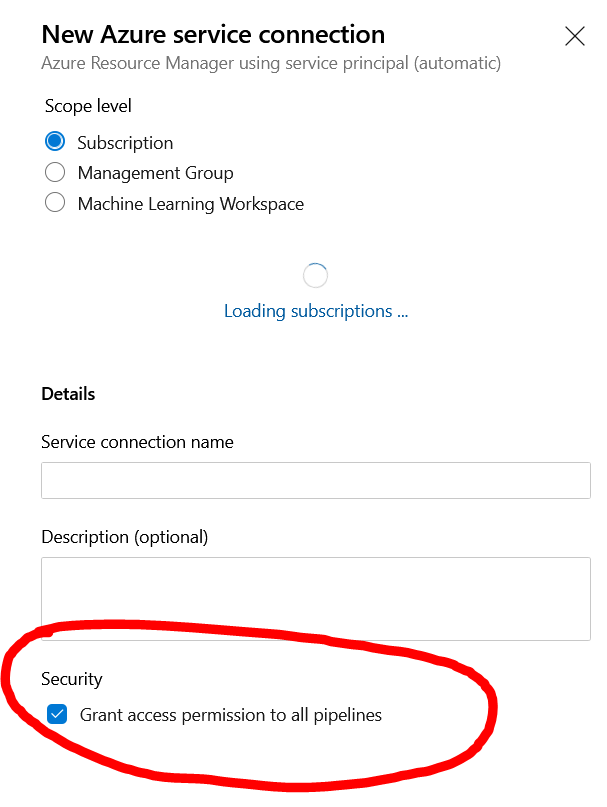

When creating a new service connection, the potentially dangerous “All Access” permission is even set by default! Be sure to uncheck this!

If you have any best-practice guides or input on how to properly deal with identity and access management within DevOps, feel free to reach out to me on Twitter @Flangvik. I would love to add it to the post. The same goes for feedback and suggestions. I’m all ears!